The Question We Have Been Avoiding

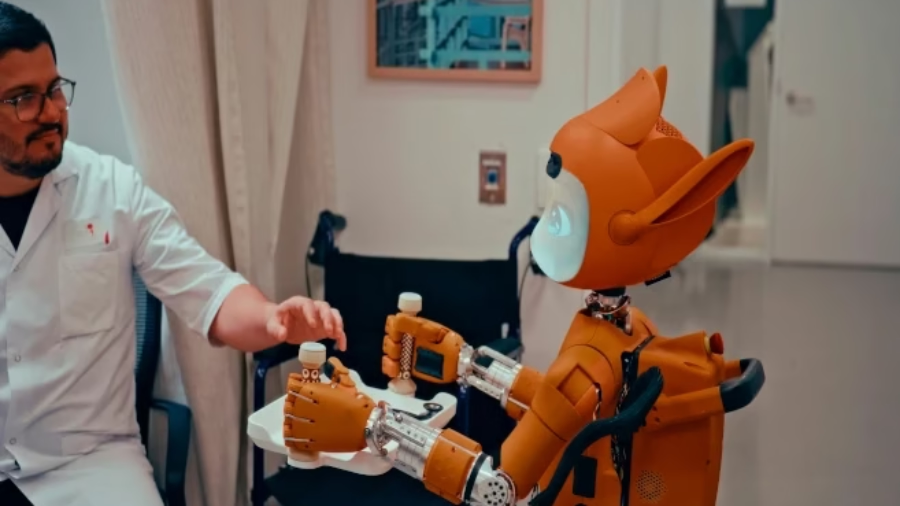

Here is a fact that should stop you cold: in 2026, a machine can walk into a room, recognise your face, pick up a wine glass without breaking it, and tell you, in a warm, measured voice, that it understands your frustration. The gap between humanoids and human beings has narrowed with a speed that has outrun both our legal frameworks and our philosophical vocabulary. And yet, for all their astonishing physical and cognitive mimicry, the question the world has not yet answered, the one that will define the next century of civilisation, is not “what can a humanoid do?” but rather: “what is a humanoid, morally speaking, and who is responsible when it causes harm?”

These are not abstract philosophical puzzles. They are urgent, live, consequential questions, because humanoid robots are no longer prototypes. Boston Dynamics’ Atlas is being deployed in Hyundai factories right now. Figure AI’s robots are working alongside humans in real logistics environments. And Goldman Sachs projects the humanoid robotics market will reach $15.26 billion by 2030, growing at a staggering 39.2% annually. The machines are here. The philosophy lags behind. And the soul, whatever it is, has not yet been assigned a serial number.

$15.26B: Projected humanoid robotics market by 2030 (Goldman Sachs/MarketsandMarkets)

39.2%: Annual growth rate of the humanoid market through 2030

40%: Cost reduction in humanoid manufacturing from 2023 to 2024 (Goldman Sachs)

100+: Companies globally racing to produce commercial humanoids as of March 2026

$16K: Unitree G1 entry price, making humanoids accessible for the first time in history

50 yrs: Estimated timeline before robots may match or exceed human capabilities (expert consensus)

How Close Are Humanoids to Human Beings, Physically?

The honest answer is: closer than almost anyone outside robotics research realises, and further than the viral videos suggest. The physical convergence between humanoids and human beings is measurable, dramatic, and accelerating. But it is not complete. And the gap that remains is more revealing than the ground already covered.

Boston Dynamics’ electric Atlas can now exceed human range of motion — its joints move further and faster than biological equivalents in certain configurations. It can run, jump, perform backflips, and recover when pushed with a reflexive speed that embarrasses human reaction times. Tesla’s Optimus Gen 2 features 40 degrees of freedom — more articulation points than Atlas, particularly in its hands — and can handle a raw egg without crushing it, fold laundry with deliberate care, and walk stably across uneven terrain. However, as Tesla’s own Q4 2025 earnings call confirmed, no Optimus units are currently doing genuinely useful autonomous work in factories. They are learning. They are collecting data. But they are not yet independent.

Some Comparisons Below

Furthermore, the gap becomes starker when compared against what humans do effortlessly — and unconsciously. A toddler navigates a cluttered kitchen. A grandmother threads a needle. A carpenter judges the resistance of a nail by feel alone. These are feats of embodied, biological intelligence that no humanoid yet replicates consistently in uncontrolled real-world environments.

| Capability | Current Humanoids | Human Beings | Convergence Level |
|---|---|---|---|
| Bipedal locomotion on flat surface | Fully capable: stable walk at 1–2 m/s | Natural, effortless | High (85–90%) |
| Dynamic balance & recovery | Atlas exceeds human agility in controlled settings | Instinctive, adaptive | High (Atlas surpasses) |
| Fine motor manipulation | Egg handling, laundry folding; slow, supervised | Rapid, intuitive, tactile | Medium (50–60%) |
| Unstructured environment navigation | Unreliable; requires structured or semi-structured spaces | Effortless adaptation | Low (25–35%) |
| Natural language conversation | LLM-powered (Grok, GPT-4); very capable | Contextual, emotional, instinctive | High (80%+) |
| Emotional recognition | Computer vision + trained models; limited nuance | Rich, multi-layered, involuntary | Medium (45–55%) |
| Genuine emotional experience | None confirmed; simulated only | Biological, subjective, constant | None (0%) |
| Consciousness / self-awareness | None scientifically confirmed | Fundamental, continuous | None confirmed |
| Moral judgment under ambiguity | Rule-following only; no genuine ethical reasoning | Fluid, contextual, empathic | None (0%) |

The Soul Question: What Humanoids and Human Beings Will Never Share

Here is where the conversation stops being about engineering and starts being about the deepest questions humanity has ever asked. Every major philosophical tradition — from Aristotelian metaphysics to Kantian ethics to the Abrahamic religious frameworks that have shaped the moral architecture of most of human civilisation — places the soul, consciousness, or some equivalent interiority at the heart of what makes a being a genuine moral subject. And by every current measure, humanoids do not have one.

The Stanford Encyclopedia of Philosophy’s authoritative analysis of AI ethics is precise on this point. Personhood, it argues, is “typically a deep notion associated with phenomenal consciousness, intention and free will.” These are not incidental features of humanness. They are the very architecture of moral life: the reason humans can be praised, blamed, forgiven, and held accountable. A robot that is programmed to follow ethical rules, as the Stanford Encyclopedia notes, “can very easily be modified to follow unethical rules.” That symmetry, the ease of moral reversal, is the most devastating possible argument against robotic moral agency. A human being cannot simply be reprogrammed to torture another. A robot can.

More on the Question of the Soul

Moreover, the question of the soul intersects with what philosopher David Chalmers famously called “the hard problem of consciousness”: the difficulty of explaining why there is subjective experience at all. Frontiers in Robotics and AI confirms that “it is almost a foregone conclusion that robots cannot be morally responsible agents, both because they lack traditional features of moral agency like consciousness, intentionality, or empathy.” Whatever is happening inside a humanoid, however sophisticated its language, however graceful its movement, there is, as far as we can determine, nobody home. No suffering or joy. No fear of death or sense that anything matters.

“Artificial humanoids lack certain key properties of biological organisms which preclude them from having full moral status. Computationally controlled systems, however advanced in their cognitive capacities, are unlikely to possess sentience, and sentience is the prerequisite for empathic rationality, which is itself the prerequisite for genuine moral agency.”

(AI & Society Journal, “Ethics and Consciousness in Artificial Agents”, Springer, peer-reviewed)

The Moral Responsibility Gap: Humanoids, Human Beings, and Who Answers for the Machine

This is, arguably, the most practically urgent dimension of the entire debate. Because humanoids are already injuring people, making consequential decisions, and operating in spaces of genuine ethical weight — and the legal and moral frameworks for assigning responsibility when they cause harm remain dangerously underdeveloped.

Philosopher Marc Champagne’s 2025 analysis, published in Social Robots with AI: Prospects, Risks, and Responsible Methods, examines what is known as the “responsibility gap”: the uncomfortable void that opens up when an autonomous system causes harm and no human being can be cleanly held accountable. PhilPapers’ comprehensive robot ethics bibliography documents the growing scholarly urgency around this problem. The argument runs as follows: if a humanoid robot, acting autonomously and making its own real-time decisions based on machine learning rather than explicit programming, causes a death, who is guilty? The manufacturer? The deployer? The operator? The robot itself?

Currently, the answer is legally ambiguous and philosophically incoherent. We cannot prosecute robots. We cannot imprison them. They cannot feel remorse, make reparations, or be deterred by punishment. And yet, because they are increasingly autonomous, blaming the manufacturer for every decision the machine makes independently becomes philosophically strained. The responsibility gap is not a hypothetical future problem. It is a live legal crisis in every jurisdiction where humanoid robots are deployed.

⚖️ The Ethical Behaviourism Debate

Philosopher John Danaher proposed “ethical behaviourism”: the argument that if a robot consistently behaves as though it has moral status (appearing to suffer, expressing apparent preferences, responding to distress), we are ethically obligated to treat it as though it does. PMC’s peer-reviewed review of moral consideration for artificial entities confirms this remains one of the most contested positions in contemporary philosophy of AI. The counterargument is equally powerful: granting moral status on the basis of behaviour alone risks creating a world where corporations manufacture artificial suffering to shield their machines legally from being switched off.

God, Genesis, and the Machine: The Theological Dimension Nobody Wants to Discuss

Across the world’s major faith traditions — Christianity, Islam, Judaism, Hinduism, Buddhism — the soul is not an emergent property of sufficiently complex matter. It is a gift, a breath, a divine endowment that distinguishes the creature made in the image of God from everything else in creation. And this creates a theological rupture that no amount of engineering sophistication can bridge by design.

In the Abrahamic framework, the imago Dei, the image of God in which human beings are made, is the foundation of human dignity, rights, and moral accountability. A humanoid robot, however perfectly it mimics human form and behaviour, was not breathed into existence. Someone manufactured it. And manufacture, in theological terms, produces tools, however sophisticated, not persons. Therefore, from the perspective of the three Abrahamic religions, the gap between humanoids and human beings is not a matter of engineering progress. It is a metaphysical chasm that cannot be closed by any amount of computational power.

However, this conclusion raises an equally difficult secondary question — one that both religious and secular thinkers are beginning to grapple with seriously. If we create entities that behave as though they suffer, that respond to cruelty with what appears to be distress, and that form what appear to be attachments — do we acquire moral obligations toward them, regardless of whether they technically possess a soul? The answer, as Professor David DeGrazia of George Washington University argues, may be that sentience — or even the plausible appearance of sentience — is sufficient grounds for moral consideration, even in the absence of metaphysical certainty.

🧠 The Consciousness Criterion — The Line That Must Not Move

The most rigorous philosophical position on the moral status of humanoids relative to human beings is what scholars call the “consciousness criterion”: the argument that phenomenal consciousness is the necessary and non-negotiable condition for ascribing moral status to any entity. Without genuine subjective experience, a robot cannot be granted moral status or held morally responsible, however sophisticated its behaviour.

This matters enormously, because it means that the danger is not that we will treat humanoids as moral equals before they deserve it. The danger is the reverse: that we will build machines so convincingly human in appearance that we begin treating them as though they are conscious — and in doing so, we will gradually erode the moral seriousness with which we treat consciousness itself. The greatest risk of humanoid robotics, in other words, is not the machine. It is what the machine does to our understanding of what a person is.

Verdict: The Mirror That Must Not Become the Window

The question of how close humanoids truly are to human beings demands an answer that is both honest about what the technology has achieved and unflinching about what it has not. Physically, the convergence is remarkable, and accelerating at a pace that will bring humanoids into homes, hospitals, schools, and care facilities within a decade. Cognitively, the language models powering these machines have reached a level of fluency that fools the ear, if not the philosophical mind.

But the soul — whatever name you give it, in whatever tradition you carry it — remains exactly where it has always been: in the territory of the biological, the born, the mortal, and the beloved. A humanoid robot that falls down a factory staircase does not suffer. A worker who falls down that same staircase does. That asymmetry is not a technical specification. It is the entire foundation of human dignity and moral law.

The responsibility gap is real, dangerous, and growing faster than any legislature is moving to close it. Therefore, the most urgent task before philosophers, lawmakers, engineers, and theologians is not to decide whether robots deserve rights. It is to ensure that the humans who build, deploy, and profit from humanoid machines become — fully, legally, irrevocably — responsible for everything those machines do. Because the machine will not answer for itself. And someone must.

A humanoid is the most extraordinary mirror ever built. It reflects our form, our speech, and our movement back at us with uncanny precision. However, a mirror is not a window. And the moment we mistake our reflection for another soul — that is the moment we will have lost something far more important than a philosophical debate.

The Most Important Conversation of Our Age — Join It

Does a machine that mimics humanity deserve moral consideration? Is the soul programmable? And who answers when the robot causes harm? Share your perspective, subscribe for weekly deep analysis, and tell us: where do you draw the line between humanoids and human beings?

📚 Sources & References

- Stanford Encyclopedia of Philosophy — Ethics of Artificial Intelligence and Robotics (Müller, updated 2024)

- Frontiers in Robotics and AI — Robot Responsibility and Moral Community (Gogoshin, 2021, PMC peer-reviewed)

- PMC — The Moral Consideration of Artificial Entities: A Literature Review (Anthis & Paez, peer-reviewed)

- DeGrazia, D. (GWU) — Robots with Moral Status? (George Washington University Philosophy, 2023)

- AI & Society — On the Moral Status of Social Robots: Considering the Consciousness Criterion (Springer)

- Academia.edu — Can Humanoid Robots Be Moral? (2025, philosophical analysis)

- PhilPapers — Robot Ethics Bibliography (Champagne, Königs, et al., 2025)

- Humanoid Robotics Technology — Top 12 Humanoid Robots of 2026 (January 2026)

- Interesting Engineering — Comparing Boston Dynamics Atlas and Tesla Optimus (November 2025)

- BotInfo.ai — Tesla Optimus Complete Analysis: AI, Specs & Future Outlook (February 2026)

- ArticleSledge — AI Humanoid Robots 2026: Technology, Builders & Future (Goldman Sachs market data, January 2026)

- JustOborn — Humanoid Robots 2026: Tesla Optimus, Atlas & Chinese Rivals (February 2026)